The premise that changed everything: Annie Duke won millions playing poker at the highest levels. Her central argument in this book is not about poker — it is about how the rest of us make decisions as if outcomes depend entirely on our choices, when in reality, uncertainty governs almost everything. Once you accept that, decision-making becomes a fundamentally different discipline.

Thinking in Bets by Annie Duke

| Published | 2018, Portfolio / Penguin |
|---|---|
| Pages | 288 |
| Category | Decision Making / Behavioral Psychology |
| Best for | Anyone who judges decisions by outcomes (which is almost everyone) |

Why a poker player’s framework applies to everything

Annie Duke is not just a poker player. She did graduate work in cognitive psychology before turning to poker, and the book draws on both backgrounds, using the structure of poker (probabilistic outcomes, incomplete information, luck and skill intertwined) as a lens for understanding how decisions work in domains far removed from the card table.

The central insight is this: in most important decisions, we cannot know in advance what will happen. We are operating with incomplete information about a world that includes significant randomness. The question is not how to make decisions that guarantee good outcomes — that is impossible. The question is how to make decisions that are good given what we know at the time, and how to evaluate our decisions honestly rather than through the distorting lens of what happened afterward.

Lesson 01

Life is poker, not chess — uncertainty is not the exception, it is the operating condition

“Poker, like the best analogy for life, is a game of incomplete information. Chess is a game of complete information — both players see everything. Life isn’t chess.”

Chess is a game of perfect information. Both players see the entire board. Every possible move is, in principle, calculable. The best player — the one who calculates most accurately — wins. There is no luck in chess outcomes.

Poker is radically different. You never see your opponent’s cards. You cannot calculate the exact probability of each possible outcome because you don’t have complete information. Luck interacts with skill in every hand. A perfect play can lose. A terrible play can win. And the challenge is to make the best decision possible given what you know, while accepting that the outcome is not fully within your control.

Duke argues that most consequential real-world decisions are far more like poker than chess. The information is incomplete. Uncertainty is structural. Luck plays a role that cannot be eliminated. And the person who consistently makes better decisions will, over time, produce better outcomes — but any individual outcome can be dominated by factors they couldn’t control.

My takeaway: Accepting that uncertainty is the operating condition — not an obstacle to be overcome — changed how I evaluate both my decisions and their outcomes. The goal became making the best bet I could with available information, not finding the certainty that doesn’t exist.

Lesson 02

Resulting: the bias that makes you learn the wrong lesson from every decision

“Resulting is the tendency to judge the quality of a decision by the quality of its outcome. It’s the most common mistake in evaluating decisions.”

“Resulting” is Duke’s term for one of the most pervasive and damaging errors in decision evaluation: judging how good a decision was by what happened afterward. If the outcome was good, the decision was good. If the outcome was bad, the decision was bad.

This seems natural and even logical. But it is wrong in a world with significant randomness. A good decision can produce a bad outcome due to bad luck. A bad decision can produce a good outcome due to good luck. Judging decision quality by outcome quality — especially from a single event — conflates two things that need to be separated to learn from experience accurately.

Duke’s poker example is perfect: if you calculate that you have a 75% chance of winning a hand and bet accordingly, you will still lose hands like that about 25% of the time. Those losses don’t mean your decision was wrong; they are the normal, expected variance. Judging the decision as bad because you lost is resulting, and it leads you to abandon a decision that is, in the long run, profitable.
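A quick simulation makes the long-run argument concrete. This Python sketch assumes an even-money bet (risk one unit to win one unit), since the example gives only the 75% figure; the stakes, the number of bets, and the function names are illustrative, not from the book.

```python
import random


def expected_value(p_win: float, win_amount: float, loss_amount: float) -> float:
    """Average profit per bet: p * win - (1 - p) * loss."""
    return p_win * win_amount - (1 - p_win) * loss_amount


def simulate(p_win: float, win_amount: float, loss_amount: float,
             n_bets: int, seed: int = 0) -> tuple[float, float]:
    """Make the same bet n_bets times; return (fraction of bets lost, total profit)."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    losses = 0
    profit = 0.0
    for _ in range(n_bets):
        if rng.random() < p_win:
            profit += win_amount
        else:
            losses += 1
            profit -= loss_amount
    return losses / n_bets, profit


if __name__ == "__main__":
    # Hypothetical even-money bet with a 75% chance of winning.
    print(expected_value(0.75, 1.0, 1.0))  # 0.5 units per bet
    loss_rate, profit = simulate(0.75, 1.0, 1.0, n_bets=100_000)
    print(f"lost {loss_rate:.1%} of bets, net profit {profit:.0f} units")
```

Roughly a quarter of the bets still lose, yet the running total ends up strongly positive. Judging each losing hand on its own would push you away from a bet that pays over time.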

My takeaway: I now actively separate my post-decision review into two questions: “Was the outcome good?” and “Was the decision good given what I knew at the time?” These questions often have different answers — and learning requires answering both, not just the first.

Lesson 03

Beliefs are bets — and most of us hold them at the wrong confidence level

“We are not used to thinking of our beliefs as bets. But they are. Every time we act on a belief, we are betting that the belief is correct.”

Every belief we hold has an implicit confidence level — how strongly we believe it to be true. Duke argues that most people hold beliefs at either 0% (“that’s false”) or 100% (“that’s true”) when the honest confidence level is almost always somewhere in between.

This binary treatment of beliefs makes it impossible to calibrate them accurately over time. When you treat a belief as certain, contradicting evidence cannot update it — because certainty doesn’t have degrees. When you treat a belief as a probability estimate, you can update it incrementally as new evidence arrives.

The practical implication is significant: when you form a belief, attach a confidence percentage to it. “I’m 70% confident this business strategy will work.” “I’m 60% sure this person is reliable.” These numbers force you to acknowledge uncertainty and make updating far more natural when evidence changes.
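Duke describes updating in plain language rather than with a formula. Bayes’ rule is one standard way to make “update incrementally” precise (my choice of tool here, not a method attributed to the book), and it also demonstrates the point about certainty directly: a belief held at exactly 0% or 100% cannot move, whatever the evidence. A minimal Python sketch; the likelihood numbers are made up for illustration.

```python
def bayes_update(prior: float, p_evidence_if_true: float,
                 p_evidence_if_false: float) -> float:
    """Return the updated confidence P(belief is true | evidence) via Bayes' rule."""
    numerator = prior * p_evidence_if_true
    denominator = numerator + (1 - prior) * p_evidence_if_false
    if denominator == 0:
        raise ValueError("evidence is impossible under both hypotheses")
    return numerator / denominator


if __name__ == "__main__":
    # Hypothetical: 70% confident a strategy will work, then an early result
    # arrives that is twice as likely if the strategy is flawed (60%)
    # as if it is sound (30%).
    print(round(bayes_update(0.70, 0.30, 0.60), 3))  # 0.538
    # The same evidence cannot move a belief held at 100% or 0%.
    print(bayes_update(1.0, 0.30, 0.60), bayes_update(0.0, 0.30, 0.60))  # 1.0 0.0
```

Moving from 70% to roughly 54% is exactly the kind of partial update that the binary true/false view cannot express.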

My takeaway: I started verbalizing confidence levels in conversations and in my decision journal. “I think this is probably right — maybe 65%.” The practice is uncomfortable at first because it exposes uncertainty you’d rather not acknowledge. It is also far more accurate than the binary certainty most people project.

Lesson 04

Decision quality vs. outcome quality — the distinction that changes how you learn

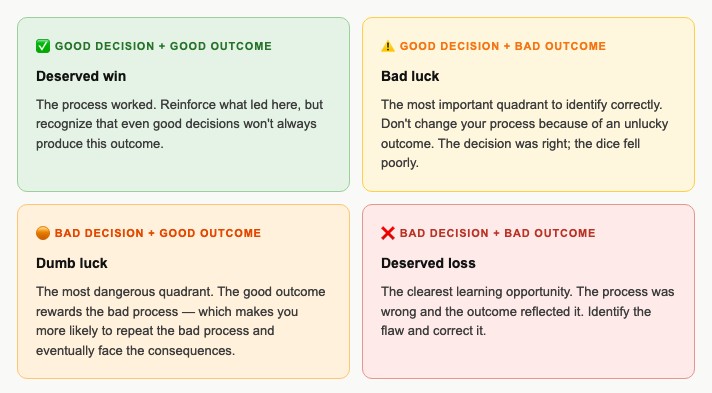

Duke provides a 2×2 framework, crossing decision quality (good or bad) with outcome quality (good or bad), that I have found practically indispensable for reviewing decisions honestly.

My takeaway: The “bad decision, good outcome” quadrant is where most bad habits are formed and reinforced. The good outcome falsely signals that the decision was fine, even when the process was flawed. Correctly spotting decisions that belong in this quadrant is one of the highest-value things a decision reviewer can do.

Lesson 05

Thinking in bets: calibrating your confidence to reality

“Thinking in bets means recognizing that there’s always a degree of uncertainty in every decision, and being honest about that uncertainty.”

The core practice Duke recommends is simple: when you hold a belief or make a decision, ask yourself “how much would I bet on this being true?” The amount you’d willingly wager is an honest measure of your actual confidence — more honest than any verbal statement, because it has real consequences.

Most beliefs we hold at apparent certainty would not survive this test. If you had to lay 10:1 odds on a belief you “know to be true” (risking $10 to win $1 if you are right), would you take the bet? The hesitation before answering is the actual confidence level revealing itself.
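The arithmetic behind this kind of test is short. A bet that asks you to risk R to win W only pays off on average when your probability of being right exceeds R / (R + W), so at 10:1 (risking 10 to win 1) the threshold is about 90.9%. A small Python sketch; the function names and the 85%/95% confidence values are hypothetical.

```python
def break_even_confidence(risk: float, reward: float) -> float:
    """Probability of being right at which a bet has zero expected value.

    Expected value = p * reward - (1 - p) * risk, which equals zero
    when p = risk / (risk + reward).
    """
    if risk <= 0 or reward <= 0:
        raise ValueError("risk and reward must be positive")
    return risk / (risk + reward)


def worth_taking(confidence: float, risk: float, reward: float) -> bool:
    """True if your stated confidence gives the bet positive expected value."""
    return confidence > break_even_confidence(risk, reward)


if __name__ == "__main__":
    # Risking $10 to win $1.
    print(f"{break_even_confidence(10, 1):.1%}")  # 90.9%
    # A belief you would honestly put at 85% fails the test; 95% passes.
    print(worth_taking(0.85, 10, 1), worth_taking(0.95, 10, 1))  # False True
```

The useful part is the comparison: if the number you would honestly attach to a “certain” belief falls below the break-even line, the belief is not as certain as it felt.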

Duke extends this to decisions: treating every decision as a bet — with an explicit probability of success and an explicit acknowledgment of what could go wrong — produces more honest analysis than the motivated reasoning most people apply to choices they’ve already emotionally committed to.

My takeaway: I now run a version of this test on significant beliefs before acting on them. “If I had to bet $1,000 on this being true, would I?” The forced concreteness of the question cuts through a remarkable amount of wishful thinking.

Lesson 06

The buddy system: using social accountability to think more accurately

“Being part of a group that rewards accuracy and penalizes resulting is one of the most powerful tools for improving your decision-making.”

Duke describes the informal accountability groups that serious poker players use — groups that meet to discuss decisions and outcomes, with a specific norm: the group evaluates decision quality, not outcome quality. Members are explicitly prevented from judging a decision as bad simply because it went poorly, or good simply because it worked out.

This norm — rewarding accuracy in process analysis rather than outcome narratives — is extraordinarily difficult to maintain without the structure of a group committed to it. On your own, resulting is almost irresistible. In a group with the right norms, resulting is called out before it can corrupt the learning.

Duke is candid that most social groups do the opposite — they reinforce resulting, validate whatever decision their friend made, and provide emotional support rather than analytical rigor. The value of a truth-seeking accountability group is precisely how unusual and uncomfortable it is.

My takeaway: I found one person — one — with whom I now do regular decision reviews using this framework. The conversations are uncomfortable in exactly the way that produces learning. Finding someone willing to tell you your decision process was flawed even when the outcome was fine is rare and genuinely valuable.

Lesson 07

Time travel: using your future self to make better present decisions

“Our future self is often wiser than our present self. Getting in touch with that future self can dramatically improve the decisions we make today.”

Duke borrows Suzy Welch’s “10-10-10” technique (asking how you will feel about a decision 10 minutes, 10 months, and 10 years from now) and extends it further: imagining your future self looking back at the decision, and also imagining a range of future selves across different possible outcomes.

The most powerful version she describes is what she calls a “premortem / pre-parade” combination. Before a decision, you run two mental simulations: one in which the decision goes badly (the premortem), and one in which it goes excellently (the pre-parade). Both simulations, when done honestly, reveal different kinds of useful information about what the decision requires and what it risks.

The pre-parade is often neglected — people are more comfortable with premortem thinking because it feels prudent. But imagining success clearly and specifically is also important, because it reveals what you’d need to do to get there and whether that path is genuinely achievable or mostly wishful.

My takeaway: I now run both simulations before any significant decision. The premortem surfaces risks. The pre-parade surfaces requirements and assumptions that need to be true for the best case to materialize. Together, they produce a more complete picture than either alone.

My honest assessment

Thinking in Bets is a book I return to more than most. Its central contribution — the resulting bias and the decision/outcome quality matrix — is genuinely useful and not widely understood outside of professional decision-making contexts. Duke writes clearly and doesn’t overextend the poker metaphor in ways that would make it feel strained.

Its weakness is some repetition in the middle sections and an occasionally uneven translation between poker strategy and everyday decision-making. But the core framework is sound and practically valuable in a way that holds up under use.

The one practice to take from this book

Start separating your post-decision reviews into two distinct questions: “How did this turn out?” and “Was my process good given what I knew?” Answer each one independently, without letting the answer to the first color the second.

That separation is the core remedy for resulting, condensed into a single habit, and it will change what you learn from experience more than almost anything else you can do.