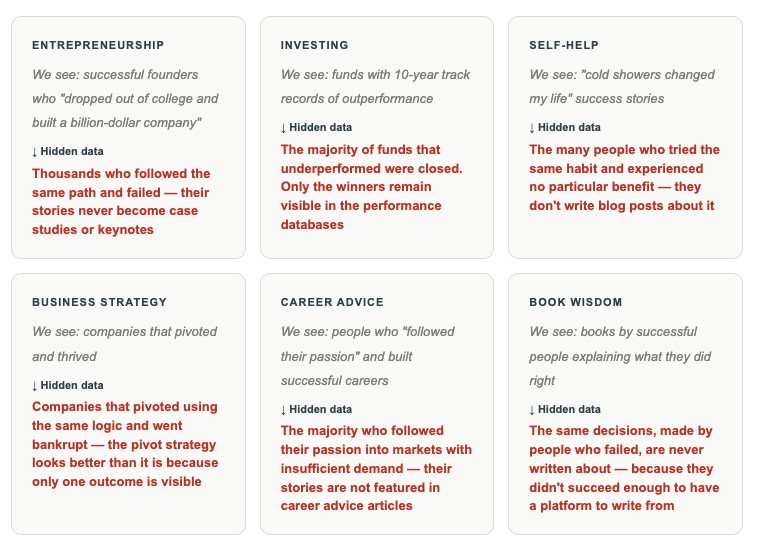

The problem with studying success: We learn from the people and businesses that made it. We read their books, follow their advice, and try to replicate their patterns. But we almost never study the thousands who followed the same patterns and failed. That gap — the systematic invisibility of failure — is survivorship bias. And it is quietly corrupting most of the lessons we think we’ve learned.

The WWII mathematician who saw what everyone else missed

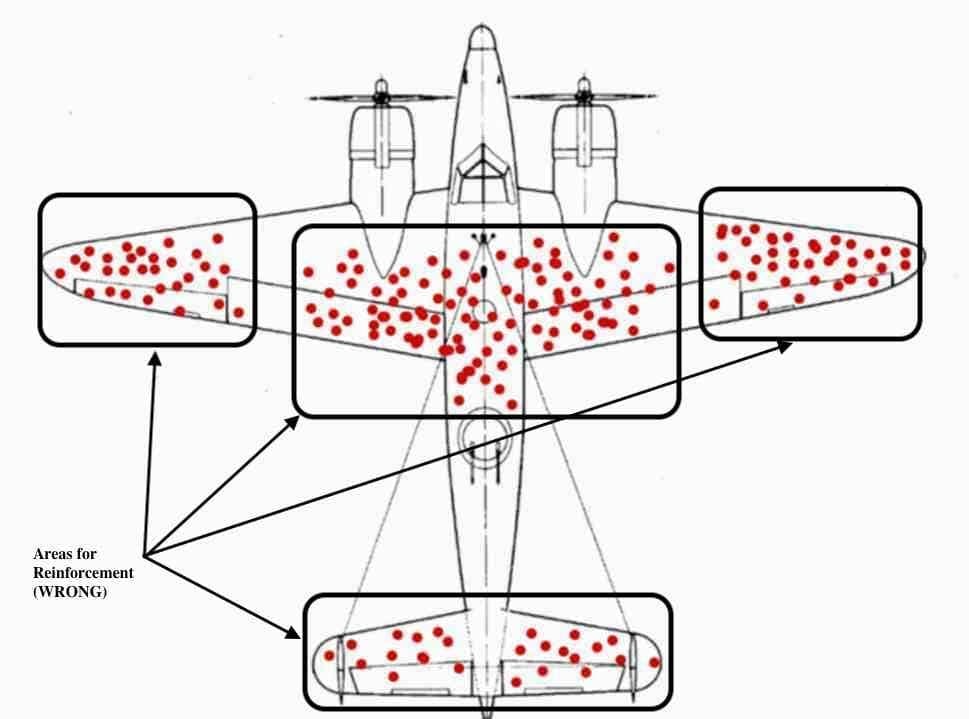

During World War II, the US military faced a problem: their aircraft were taking heavy fire and many were being shot down. To address this, they examined the planes that returned from missions and mapped where the bullet holes were concentrated. The logical conclusion seemed clear — reinforce the areas with the most bullet damage.

Then a Hungarian-born mathematician named Abraham Wald pointed out something that should have been obvious but wasn’t: the bullet holes on the returning planes showed exactly where a plane could be hit and still make it back. The places with no bullet holes weren’t unharmed — they were the places where a hit meant the plane didn’t return at all.

The military had been studying the wrong planes. The data they needed was in the planes they never got to examine: the ones that were shot down and never came back. The armor needed to go on the engines and cockpits, not the wings. Wald’s insight is widely credited with saving lives, and it remains one of the best-known demonstrations of survivorship bias.
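To make the mechanism concrete, here is a minimal simulation sketch. Everything in it is an assumption for illustration: the four sections, the per-hit loss probabilities, and the idea that hits land uniformly at random are invented, not taken from Wald's actual analysis. It only shows how the holes counted on returning planes can diverge from where planes were actually hit.

```python
import random

# Hypothetical chance that a single hit to each section downs the plane.
# These numbers are invented for illustration, not historical data.
LOSS_PROB_PER_HIT = {"engine": 0.6, "cockpit": 0.5, "fuselage": 0.1, "wings": 0.05}
SECTIONS = list(LOSS_PROB_PER_HIT)


def simulate(num_planes=10_000, hits_per_plane=3, seed=0):
    """Count hits per section on all planes and on returning planes only."""
    rng = random.Random(seed)
    all_hits = {s: 0 for s in SECTIONS}       # the full picture (unobservable in practice)
    returned_hits = {s: 0 for s in SECTIONS}  # what analysts on the ground can count
    for _ in range(num_planes):
        # Assumption: every section is equally likely to be hit.
        hits = [rng.choice(SECTIONS) for _ in range(hits_per_plane)]
        # The plane returns only if it survives every hit it took.
        survived = all(rng.random() > LOSS_PROB_PER_HIT[s] for s in hits)
        for s in hits:
            all_hits[s] += 1
            if survived:
                returned_hits[s] += 1
    return all_hits, returned_hits


all_hits, returned_hits = simulate()
for s in SECTIONS:
    print(f"{s:9s} all planes: {all_hits[s]:6d}   returning planes: {returned_hits[s]:6d}")
```

Under these made-up probabilities, every section is hit about equally often, yet the returning planes should show far fewer engine and cockpit holes than wing holes. Someone looking only at the second column would conclude the wings need the armor.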

The lesson generalizes far beyond wartime aircraft. In almost any domain where we study outcomes, the data available to us is systematically skewed toward the survivors — the successes, the ones who made it back. The failures — often the majority — are invisible. And the lessons we draw from the visible data alone are frequently wrong.

What survivorship bias actually is

Survivorship bias is a logical error that occurs when we focus on entities that passed through a selection process and ignore those that did not — typically because the failures are invisible or inaccessible. The result is a distorted picture of reality in which success appears more common, more replicable, and more causally explained than it actually is.

“The cemetery of silent failures is the place we should be studying. Instead, we build our lessons from the people standing in front of us — the ones who made it.”— Nassim Nicholas Taleb, The Black Swan

The bias operates through a simple mechanism: success is visible and failure is not. Successful companies are written about. Successful investors are profiled. Successful habits are shared. The companies that failed using the same strategy, the investors who followed the same approach and went bankrupt, the people who built the same habits and didn’t get the same results — they are silent. They write no books. They give no interviews. They produce no case studies for business schools.

We then mistake the visibility of success for evidence of its frequency and replicability. The pattern that produced success among the visible winners looks like a reliable recipe — because we’re not seeing the many people who followed it and lost.

Where it shows up — in business, investing, and everyday thinking

How survivorship bias distorted my own business decisions

I spent years studying successful businesses. I read case studies, listened to founder interviews, and built my mental model of “what works” almost entirely from examples of things that had worked. My library was full of books by successful entrepreneurs. My podcast queue was full of conversations with people who had built thriving companies.

Then someone asked me a question that disrupted the entire framework: “How many businesses tried exactly what you’re describing and failed? And why don’t you know?”

I didn’t know because I had never looked. And I had never looked because the failures weren’t in my information feed. They weren’t writing books. They weren’t giving keynotes. They weren’t in the case study databases.

The specific decision that crystallized this for me was around content marketing. I had read multiple accounts of founders who built significant businesses by producing free content consistently for years before monetizing. The pattern was compelling and the stories were vivid.

What I hadn’t accounted for was the base rate — how many businesses had followed the same strategy, produced content consistently for years, and then failed to build the audience or the monetization that the success stories described. I had no idea. And without that denominator, the numerator — the success stories — was meaningless as a guide to probability.
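The numerator and denominator point can be shown with a few lines of arithmetic. This is only a sketch: the function names `success_rate` and `describe` are hypothetical, and the counts are invented, since the real number of attempts is exactly what I did not have.

```python
def success_rate(successes, attempts):
    """Return successes / attempts, or None when the denominator is unknown."""
    if attempts is None:
        return None  # no denominator, so no probability estimate
    if attempts <= 0 or not 0 <= successes <= attempts:
        raise ValueError("need attempts > 0 and 0 <= successes <= attempts")
    return successes / attempts


def describe(successes, attempts):
    """Summarize what a set of success stories does and does not tell us."""
    rate = success_rate(successes, attempts)
    if rate is None:
        return f"{successes} success stories, unknown attempts: existence proof only"
    return f"{successes} of {attempts} succeeded: {rate:.1%}"


# Hypothetical numbers, invented purely for illustration.
stories = 12
for attempts in (40, 4000, None):
    print(describe(stories, attempts))
```

The same twelve stories are consistent with a strategy that works for roughly one in three people or for roughly one in three hundred. Without the denominator, there is no way to tell which world you are in.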

Why survivorship bias is so hard to see

The bias is structurally self-concealing in a way that makes it unusually difficult to correct. The problem is not that we’re ignoring information we can see. The problem is that the information we most need — the failure data — is largely inaccessible.

Failed companies don’t produce legacy case studies. Failed investors don’t have performance databases maintained over time. Failed creators don’t build platforms with large audiences. The selection process that determines what information reaches us is systematically biased toward success — which means the world looks more predictable, more replicable, and more meritocratic than it actually is.

There is also a psychological amplifier: success stories are more emotionally compelling than failure stories. They produce hope and motivation. They feel instructive. Failure stories feel discouraging — and so even when they are available, they tend to receive less attention and are less readily recalled. The bias in our information environment combines with a bias in our attention to produce a picture of reality that is significantly more optimistic than the data warrants.

How to correct for it — practically and specifically

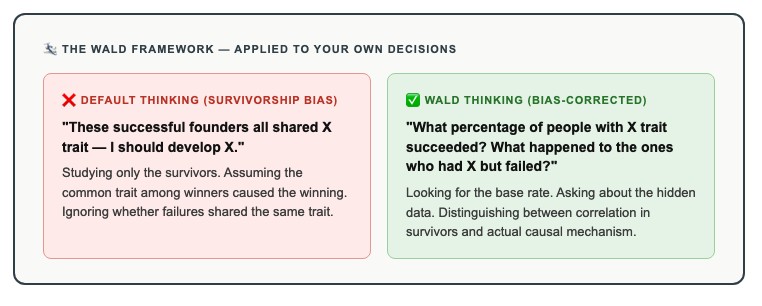

- Always ask for the base rate. When you encounter a success story, the first question to ask is: “Of all the people who tried this approach, what percentage succeeded?” If you can’t find that number (and often you can’t), treat the success story as an existence proof, not a probability estimate. It shows the outcome is possible. It tells you nothing about how likely it is.

- Actively seek failure cases. For any strategy or approach you’re considering, deliberately search for cases where it failed. The goal is not to confirm pessimism but to understand the conditions under which the strategy works and those under which it doesn’t. Failure cases are harder to find, but they exist, and they are almost always more instructive than the success cases.

- Distinguish correlation from causation in survivor data. Just because successful people share a trait does not mean that trait caused the success. Many unsuccessful people may share the same trait. Ask: is this trait common among the failures too? If the answer is yes, the trait is not the differentiator; you’re observing a correlation in a biased sample.

- Weight firsthand failure accounts more heavily than you naturally would. When you encounter an account of failure (a business that didn’t work, a strategy that backfired, a career path that didn’t pan out), pay it deliberate extra attention. Our natural tendency is to weight success stories more heavily because they’re more motivating. Correcting for this means deliberately counterweighting toward the data we’re structurally inclined to undervalue.

- Ask “who is not in this room?” This is the most generalizable formulation of the Wald insight. In any sample of evidence you’re examining — a book, a case study, a conference, a peer group — ask who is systematically absent and why. The people not in the room often carry the most important information about what doesn’t work.
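The trait check from the list above can be sketched in code, assuming you somehow have records for the failures as well as the survivors (which is the hard part in practice). The function name `trait_report`, the record layout, and the counts are all hypothetical and chosen only to illustrate the comparison.

```python
from collections import Counter


def trait_report(records):
    """Compare a trait across survivors and failures.

    records: iterable of (has_trait, succeeded) boolean pairs.
    Assumes each of the four groups used as a denominator is non-empty.
    """
    counts = Counter(records)
    survivors = counts[(True, True)] + counts[(False, True)]
    failures = counts[(True, False)] + counts[(False, False)]
    with_trait = counts[(True, True)] + counts[(True, False)]
    without_trait = counts[(False, True)] + counts[(False, False)]
    return {
        "trait_share_among_survivors": counts[(True, True)] / survivors,
        "trait_share_among_failures": counts[(True, False)] / failures,
        "success_rate_with_trait": counts[(True, True)] / with_trait,
        "success_rate_without_trait": counts[(False, True)] / without_trait,
    }


# Hypothetical data: 20 survivors and 180 failures, invented for illustration.
records = (
    [(True, True)] * 18 + [(False, True)] * 2
    + [(True, False)] * 162 + [(False, False)] * 18
)

for name, value in trait_report(records).items():
    print(f"{name}: {value:.0%}")
```

In this invented sample, the trait shows up in most of the survivors, which looks like a recipe if the survivors are all you see. It shows up just as often among the failures, and the success rate is the same with or without it, so the trait is not the differentiator.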

Conclusion: the most important data is usually the data you can’t see

Abraham Wald’s insight about the WWII planes was not complicated. The data needed was simply the data that wasn’t there — the planes that didn’t come back. Once he asked about the absent data, the answer was obvious. The challenge was recognizing that the absent data existed and mattered.

The same challenge applies to almost every domain where we try to learn from outcomes. The information available to us is systematically skewed toward the survivors. The lessons drawn from that information alone are systematically skewed toward overestimating how replicable and predictable success is.

Correcting for this doesn’t mean becoming pessimistic or refusing to learn from success. It means holding success stories more lightly, asking for base rates more consistently, and deliberately seeking the failure data that survivorship bias has made structurally invisible.

The question to ask before any decision based on success stories

“What would I need to know about the people who tried this and failed — and where would I find that information?”

If the honest answer is that you can’t find it, treat the success stories you have as partial evidence only: motivating, possibly instructive, but incomplete in a way that matters before you act on them.