The most dangerous bias is the invisible one

Confirmation bias doesn’t feel like a bias. It feels like pattern recognition, careful analysis, and justified confidence. That’s exactly what makes it so destructive. By the time you realize it’s running your thinking, it’s already made several important decisions for you.

The business case that taught me what I wasn’t seeing

Several years ago I was evaluating a business opportunity — a new market I was considering entering. I researched it thoroughly. I spoke to people in the industry. I analyzed the competitive landscape. I built a financial model. After several weeks of work, my conclusion was clear: the opportunity was real, the timing was good, and I should move forward.

A trusted friend who had more experience in adjacent markets asked me a simple question: “Who did you talk to who thought this was a bad idea?”

I realized, with a clarity that was genuinely uncomfortable, that I hadn’t spoken to a single person who was skeptical of the opportunity. Every conversation I had sought out was with people who were enthusiastic about the market. Every article I had read was one I had found by searching for evidence that the opportunity existed. Every model assumption I had made was optimistic.

I had not conducted research. I had conducted a confirmation exercise dressed up as research. My conclusion was predetermined — what I was looking for was permission, not truth.

That experience introduced me, properly, to confirmation bias. Not as an abstract cognitive psychology concept but as a live, active, expensive force in my actual decision-making.

What confirmation bias actually is — and isn’t

Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms or supports one’s existing beliefs or values. It was formally identified by English psychologist Peter Wason in 1960 through a series of elegant experiments and has since become one of the most replicated findings in cognitive psychology.

It is important to understand what confirmation bias is not: it is not simply believing wrong things. Everyone believes some wrong things. Confirmation bias is the process by which wrong beliefs become self-reinforcing: the mechanism that makes them resistant to updating even in the face of contradicting evidence.

“The human mind is a story processor, not a logic processor. Most people, when confronted with evidence that challenges their beliefs, do not change their beliefs. They change the evidence.” (Jonathan Haidt, The Righteous Mind)

This is what makes confirmation bias categorically different from simply being wrong. A belief held without this bias is, in principle, correctable by new information. A belief held within the grip of confirmation bias is not — because every new piece of information gets processed through a filter that preserves the belief.

How it works in the brain

Confirmation bias operates through three distinct mechanisms that often work simultaneously:

Selective search

When we seek information, we tend to search for evidence that confirms what we already believe. A person who thinks a particular investment is promising tends to search for reasons it will succeed — not reasons it might fail. This produces information asymmetry: we encounter far more confirming evidence than disconfirming evidence, not because disconfirming evidence doesn’t exist, but because we don’t look for it.

Selective interpretation

When we encounter ambiguous evidence — information that could support either conclusion — we tend to interpret it in the direction of our existing belief. The same economic data looks bullish to the optimist and bearish to the pessimist. The interpretation is not neutral; it is processed through the lens of what we want to find.
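To make the effect of selective interpretation concrete, here is a minimal sketch. It assumes beliefs are updated with Bayes’ rule in odds form, and it models the bias as reading each ambiguous item as mild support. The ratio of 1.5 and the count of ten items are made-up illustrative numbers, not measurements, and the function names are hypothetical.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    odds = prior / (1.0 - prior)
    odds *= likelihood_ratio
    return odds / (1.0 + odds)


def belief_after(prior: float, n_items: int, perceived_ratio: float) -> float:
    """Apply the same perceived likelihood ratio to n_items pieces of evidence."""
    belief = prior
    for _ in range(n_items):
        belief = update(belief, perceived_ratio)
    return belief


# Ten genuinely ambiguous observations: a ratio of 1.0 means "supports neither side".
neutral = belief_after(prior=0.5, n_items=10, perceived_ratio=1.0)

# Assumed bias: each ambiguous item is read as mild support (ratio 1.5).
biased = belief_after(prior=0.5, n_items=10, perceived_ratio=1.5)

print(f"neutral reader: {neutral:.2f}")
print(f"biased reader:  {biased:.2f}")
```

In this toy model both readers see exactly the same evidence. The neutral reader’s confidence stays at 50 percent, while the biased reader ends up close to certain, even though nothing in the evidence actually favored either conclusion.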

Selective recall

We remember evidence that confirmed our beliefs more vividly and more accurately than evidence that challenged them. This means the bias compounds over time: even in cases where we were exposed to balanced information, we reconstruct a memory that is skewed toward confirmation. The belief strengthens not because the world provided more supporting evidence, but because memory provided a selectively edited version of what we encountered.

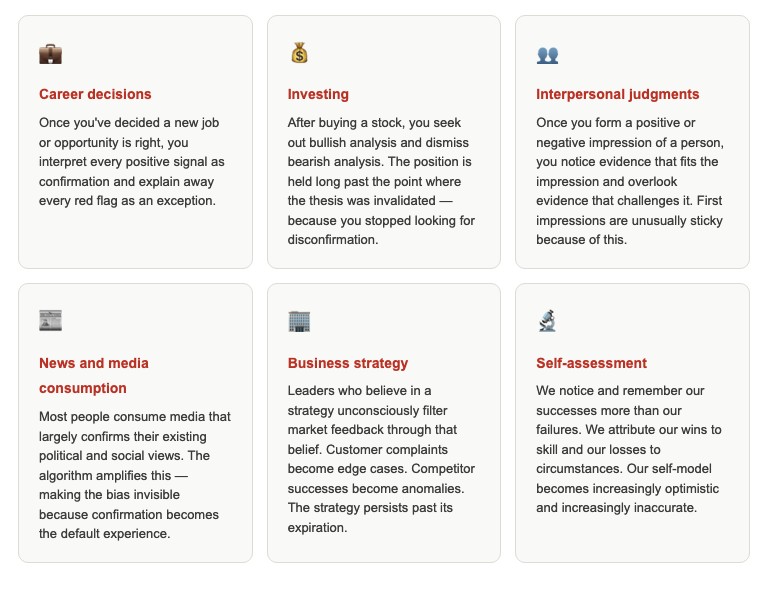

Where it shows up in your life right now

Why smart people are more vulnerable, not less

This is the part that is genuinely surprising and worth understanding clearly: higher intelligence does not protect against confirmation bias. In several important ways, it amplifies it.

This phenomenon, studied in the academic literature as “motivated reasoning” and sometimes popularized as the “smart idiot effect,” has been documented across multiple studies. The finding is consistent: people with higher cognitive ability are better at constructing justifications for their existing beliefs. They are more skilled at finding flaws in arguments that challenge them. They are more articulate in explaining why the evidence against them is wrong.

Intelligence, in other words, is a tool — and like any tool, it can be used in the service of truth-seeking or in the service of belief-protection. Confirmation bias tends to commandeer the tool for the second purpose without the person noticing.

⚠️ The uncomfortable implication

If you are reading this and thinking “I understand confirmation bias, so I’m probably less susceptible to it than most people” — that thought is itself a manifestation of the bias it describes.

Awareness of a bias may reduce its impact but does not eliminate it. Daniel Kahneman, who spent his career studying cognitive biases, reports being frequently subject to them despite knowing precisely how they work.

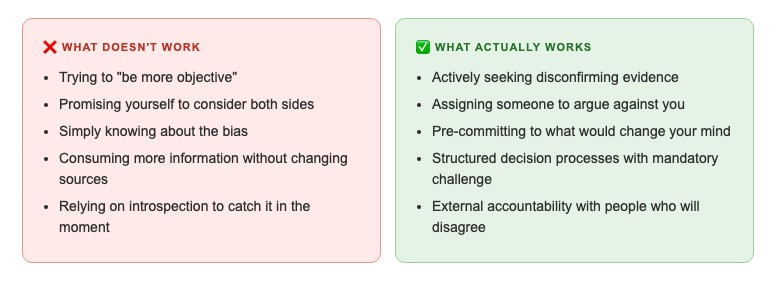

Five antidotes that actually work

- Actively seek disconfirming evidence, and make it a formal step. For any significant belief or decision, spend dedicated time (not just willingness) searching specifically for the strongest arguments against your position. Not to find balance, but to find the best case against you. If you can’t articulate the opposing case better than its proponents, you don’t understand the issue.

- Use a “steel man” instead of a straw man. A straw man is a weak version of the opposing argument. A steel man is the strongest possible version. Before dismissing a contradictory view, ask: what is the most compelling version of this argument? Can you state it so well that its proponents would agree that’s their position? If not, you haven’t actually engaged with the challenge.

- Assign a dedicated “challenger” role. In any important decision involving other people, formally assign one person the role of arguing against the dominant view, regardless of their personal opinion. This institutionalizes challenge and removes the social pressure that makes disagreement feel like disloyalty. The goal is not to be contrarian but to surface the case that confirmation bias would otherwise suppress.

- Pre-commit to falsification criteria. Before committing to a belief or decision, write down: “I will revise or abandon this belief/decision if I discover X.” This creates a pre-committed standard for updating, one established before the emotional investment deepens, when the standard is more likely to be genuinely falsifiable.

- Audit your information diet. Who challenges your views regularly? What sources do you read that disagree with your priors? If your honest answer is “very few” or “none,” your information environment is actively feeding confirmation bias. Not all disagreement is valuable, but its absence is almost always costly.

How I practice actively disconfirming my beliefs

Knowing the antidotes and practicing them are different things. Here is what I have actually built into my regular thinking process.

For any significant belief I hold — about my work, my investments, my capabilities, or any situation I’m evaluating — I maintain what I call a “challenge file”: a running document of the strongest arguments against my current position on that question. I update it when I encounter genuinely challenging perspectives. I read it before making decisions in the relevant area.

For decisions specifically, I use the pre-commitment question I described in the decision journal article: “What information, if I encountered it, would cause me to change my position?” I write the answer before the decision. If I can’t answer it, that is a signal that I have already closed myself to update — which is itself important information.

And I maintain at least one relationship with someone whose opinion I respect and whose views frequently differ from mine — someone who will tell me directly when they think I’m wrong and whose challenge I take seriously rather than explain away. This is harder to build than a process or a document. It is also more valuable.

✅ The question I ask before any significant commitment

“What would I need to see to change my mind about this? And have I actually looked for it?”

If the honest answer to the second part is no — the commitment gets paused. Not indefinitely. But until I have genuinely looked for the case against the position I’m about to act on.

That pause has prevented more bad decisions than any other single practice in my toolkit.
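For readers who keep their decision notes in a script or notebook, the two-part question can be sketched as a small record. This is only one possible shape, not a description of my actual files: the `Commitment` class, its field names, and the status strings are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Commitment:
    """A pending decision plus the evidence that would overturn it (hypothetical)."""

    decision: str
    # Answers to "What would I need to see to change my mind about this?"
    would_change_my_mind: list[str] = field(default_factory=list)
    # The criteria from the list above that have actually been investigated.
    looked_for: set[str] = field(default_factory=set)

    def mark_investigated(self, criterion: str) -> None:
        """Record that a written-down criterion has genuinely been looked for."""
        if criterion not in self.would_change_my_mind:
            raise ValueError(f"unknown criterion: {criterion!r}")
        self.looked_for.add(criterion)

    def status(self) -> str:
        """Return 'ready' only when every criterion exists and has been checked."""
        if not self.would_change_my_mind:
            return "paused: no falsification criteria written down"
        missing = [c for c in self.would_change_my_mind if c not in self.looked_for]
        if missing:
            total = len(self.would_change_my_mind)
            return f"paused: {len(missing)} of {total} criteria not yet investigated"
        return "ready"


plan = Commitment(
    decision="enter the new market",
    would_change_my_mind=["experienced operators think this is a bad idea"],
)
print(plan.status())  # paused until the skeptics have been sought out
plan.mark_investigated("experienced operators think this is a bad idea")
print(plan.status())
```

The design choice worth noting is that an empty list of criteria also pauses the commitment. Being unable to say what would change your mind is treated as a warning sign, not as a free pass.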

Conclusion: the belief worth interrogating most is the one you’re most certain of

Confirmation bias is the cognitive bias most likely to be operating on your most important beliefs right now — because it is most powerful precisely where you feel most certain. The strength of your conviction is not evidence of the quality of your reasoning. It may be evidence of how thoroughly confirmation bias has had time to operate.

The antidote is not doubt for its own sake. It is disciplined, structured, specific inquiry into the case against your current position — conducted not to reach a different conclusion, but to ensure the conclusion you hold has survived genuine challenge.

The business case I described at the start of this article eventually failed, for exactly the reasons that a thorough challenge would have surfaced. I proceeded anyway because I had confused the feeling of certainty with the reality of correctness.

Those two things are not the same. A decision journal, a challenge file, and one honest conversation with a skeptic might have revealed the difference. The cost of not doing that work was significant and completely avoidable.

Start with one belief you currently hold with high confidence. Find the best case against it. Read it carefully. See what it does to your certainty level.

If it does nothing — that, too, is data worth having.