Introduction: The Link Between Thinking and Outcomes

In the rigorous study of systems and strategic behavior, a persistent fallacy continues to obscure our understanding of success: the tendency to judge the quality of a decision solely by the quality of its immediate outcome. This habit, often termed “resulting,” assumes a direct, deterministic link between an actor’s intent and the eventual state of reality. However, after years of analyzing decision-making processes in high-stakes environments, ranging from capital allocation in financial markets to the trajectory of complex career paths, I have observed that this link is fundamentally probabilistic rather than deterministic.

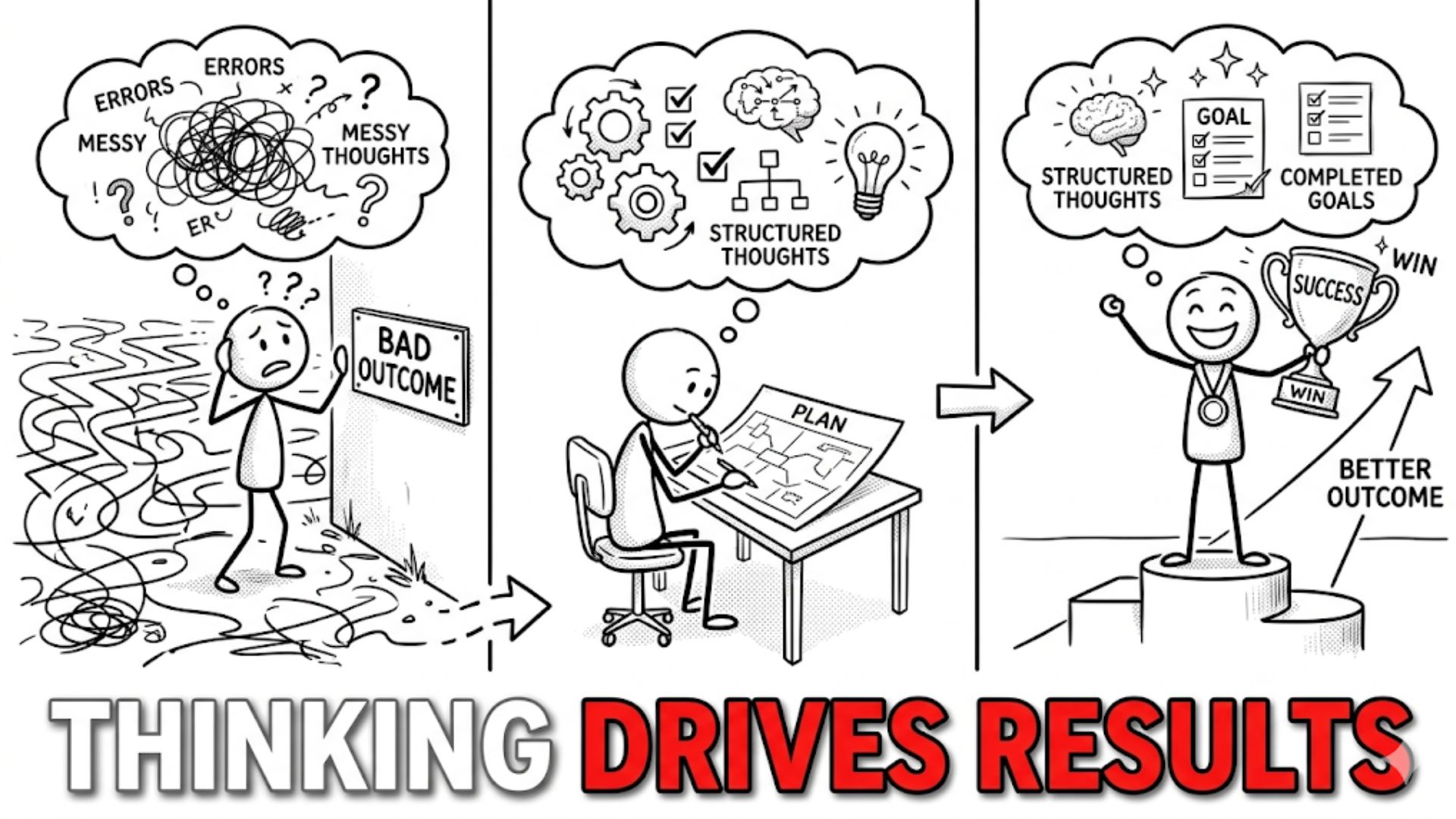

Outcomes are shaped by two distinct inputs: the quality of the decision-making process and the influence of environmental randomness, or variance. While we cannot command the latter, we have substantial agency over the former. Better thinking is not a talisman that guarantees success in every individual instance; rather, it is a structural advantage. It is an optimized cognitive architecture that, over a sufficiently large sample size of decisions, shifts the distribution of results toward more favorable outcomes. To understand how better thinking leads to better results, one must move beyond the superficial analysis of “wins” and “losses” and investigate the underlying causal mechanisms: the reduction of systematic error, the alignment of internal models with external reality, and the rigorous management of uncertainty.

Outcomes vs. Decision Quality

A fundamental requirement for intellectual rigor is the decoupling of decision quality from outcome quality. In a stochastic environment—where information is incomplete and the future is not a linear projection of the past—it is entirely possible to make a technically “perfect” decision and still experience a catastrophic result. Conversely, one can make an abysmal decision and be rewarded with a spectacular outcome due to pure luck.

Consider a professional poker player who goes all-in as a 98% favorite but loses the hand because the 2% event occurs. To an untrained observer, the loss is a failure. To a systems thinker, the decision was correct because the expected value was overwhelmingly positive. Evaluating thinking quality requires an “epistemic humility” that prioritizes process over outcome. If we judge ourselves by outcomes alone, we risk reinforcing “bad” processes that were merely lucky and abandoning “good” processes that were momentarily unlucky. Better thinking involves the creation of a “pre-mortem” and “post-mortem” culture where the logic, data, and assumptions used at the time of the decision are the primary metrics of evaluation, regardless of the noise produced by randomness.
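The poker example can be made concrete with a small expected-value calculation. This is a minimal sketch: the 98% figure comes from the example above, but the stake sizes, the 50-hand sample, and the function names are hypothetical choices made only for illustration.

```python
def expected_value(p_win: float, win_amount: float, loss_amount: float) -> float:
    """Expected profit of one bet: p * win - (1 - p) * loss."""
    return p_win * win_amount - (1.0 - p_win) * loss_amount


def prob_at_least_one_loss(p_win: float, n_bets: int) -> float:
    """Chance of losing at least once in n independent bets."""
    return 1.0 - p_win ** n_bets


# Hypothetical stakes: risk 100 chips to win 100 chips as a 98% favorite.
ev = expected_value(p_win=0.98, win_amount=100.0, loss_amount=100.0)

# Assumed sample size: the same spot played 50 independent times.
p_some_loss = prob_at_least_one_loss(p_win=0.98, n_bets=50)

print(f"EV per hand: {ev:.1f} chips")
print(f"P(at least one loss in 50 hands): {p_some_loss:.2f}")
```

Under these assumptions the bet is worth about 96 chips per hand, yet a player who makes it 50 times should expect to lose at least once roughly 64% of the time. A single loss therefore says very little about whether the decision was sound.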

Bounded Rationality and Cognitive Limits

The structural constraints on human thinking were famously codified by Herbert Simon through the concept of bounded rationality. Traditional economic models often assume “Homo Economicus”—an agent with infinite computational power and perfect information. Simon correctly argued that human beings are “satisficers”; we have limited cognitive bandwidth, finite time, and incomplete data. We do not optimize for the “best” possible outcome; we search for a “good enough” solution that satisfies our immediate constraints.

This bounded nature of our rationality is the primary source of decision error. Because we cannot process every variable in a complex system, we rely on mental shortcuts or heuristics to reduce cognitive load. While these heuristics are evolutionarily efficient for survival, they are often ill-suited for the abstract, non-linear problems of modern business and finance. Better thinking acknowledges these structural boundaries not as flaws to be ignored, but as parameters to be managed. By recognizing our cognitive “limiters,” we can implement external systems—checklists, external audits, and formal models—to expand the bounds of our rationality and reduce the reliance on gut intuition, which is often a mask for unrecognized biases.

Cognitive Biases and Systematic Errors

Building on the foundation of bounded rationality, the work of Daniel Kahneman and Amos Tversky identified that human error is not random; it is systematic. Our “System 1” thinking (fast, intuitive, emotional) frequently hijacks “System 2” (slow, analytical, logical), leading to predictable deviations from reality known as cognitive biases.

Several key biases structurally impair outcome quality:

- Overconfidence: The tendency to overestimate our own knowledge and the precision of our forecasts. This leads to inadequate margins of safety in financial and strategic planning.

- Availability Bias: The inclination to overweight information that is most recent, vivid, or emotionally salient, rather than information that is representative of the underlying base rates.

- Loss Aversion: The empirical finding that the pain of a loss is felt roughly twice as strongly as the joy of an equivalent gain. This bias contributes to the “disposition effect,” where investors hold onto losing assets too long in hopes of breaking even, thereby increasing their total downside risk.

- Anchoring: Being disproportionately influenced by the first piece of information encountered, even if it is irrelevant to the decision at hand.

Better thinking is a process of “de-biasing.” It involves creating a mental “firewall” between emotional impulse and strategic action. By studying these systematic errors, an analyst can anticipate where their own thinking is likely to fail and install “nudges” or constraints to counteract the bias before it manifests as a sub-optimal outcome.

Mental Models and Structured Thinking

If heuristics are the “shortcuts” of thought, mental models are the “latticework.” As popularized by Charlie Munger, high-quality thinking is the result of possessing a broad array of models from various disciplines—physics, biology, psychology, engineering, and economics—to interpret reality.

A single-model approach is a structural vulnerability. If you only think like an economist, you may miss the psychological drivers of a market bubble or the biological reality of an aging population. Better outcomes are achieved through “multi-disciplinary synthesis.”

- Entropy (Physics): Understanding that without constant energy and organization, systems naturally trend toward disorder.

- Evolutionary Biology: Recognizing that competition and adaptation are the primary drivers of industry change.

- Redundancy (Engineering): Building “margin of safety” into financial models to survive black swan events.

By layering these models, a thinker creates a more accurate “map” of the territory. The closer the mental map aligns with the actual terrain of reality, the lower the probability of a “navigational error” that leads to a poor strategic outcome.

Time Horizons and Outcome Quality

One of the most persistent drivers of poor outcomes is temporal myopia—the over-valuation of the immediate future and the under-valuation of the long term. Short-term thinking is structurally incentivized in many systems: quarterly earnings, annual performance reviews, and the dopamine hit of social media validation.

However, many of the most valuable processes in reality follow a non-linear, compounding curve. Compounding is often called the “eighth wonder of the world,” but it requires time as its primary input. Better thinking aligns decisions with the “appropriate time horizon” of the system. In investing, this means thinking in decades rather than days. In career development, it means prioritizing “career capital” and skill acquisition over immediate title or salary increases.

By extending the time horizon, a thinker gains an “arbitrage” advantage. Most market participants are optimizing for the next 90 days. If you can optimize for the next 10 years, you are essentially competing in a less crowded, more rational market. Time horizon alignment reduces the “noise” of short-term variance and allows the “signal” of compounding to dominate the final result.
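A rough way to see why a longer horizon favors signal over noise is the following sketch. It assumes, as a simplification not made in the text above, that each period's result is independent, with the same average edge $\mu$ and the same standard deviation $\sigma$. Writing $S_T$ for the cumulative result after $T$ periods (for an investment, the sum of log returns):

$$
\mathbb{E}[S_T] = \mu T, \qquad \operatorname{sd}(S_T) = \sigma\sqrt{T}, \qquad \frac{\mathbb{E}[S_T]}{\operatorname{sd}(S_T)} = \frac{\mu}{\sigma}\sqrt{T}
$$

The edge accumulates in proportion to $T$, while the noise accumulates only in proportion to $\sqrt{T}$. Under these assumptions, a horizon 100 times longer yields a signal-to-noise ratio 10 times larger. Real returns are neither independent nor identically distributed, so this is an intuition aid rather than a forecast.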

Feedback Loops and Learning Systems

Improvement in thinking quality is not a static achievement but a dynamic process of calibration. This is governed by feedback loops. A feedback loop exists when the output of a system (the outcome) is returned to the input (the decision process) to refine future actions.

For a feedback loop to be effective, it must be:

- Accurate: The outcome must be correctly attributed (e.g., did we win because of skill or luck?).

- Timely: Feedback that arrives too late cannot influence the next decision cycle.

The difficulty in high-complexity domains like business strategy or macro-investing is that feedback loops are often “loose” and delayed. A CEO may make a strategic decision today that doesn’t show its true result for five years. By the time the feedback arrives, the environment has changed, and the causal link is obscured. Better thinking involves the creation of “synthetic” feedback loops—keeping a “decision journal” to track assumptions in real-time and using “pre-mortems” to simulate failure before it occurs. High-quality learning systems are designed to minimize the “learning lag,” allowing for more rapid calibration of the internal model.
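One possible shape for a decision journal is sketched below. The schema, the field names, and the use of the Brier score as a calibration check are all assumptions introduced for illustration; the text above does not prescribe any particular format or metric.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class DecisionEntry:
    """One journal entry, written at decision time (hypothetical schema)."""
    made_on: date
    decision: str
    assumptions: List[str]
    forecast_probability: float       # stated chance of success, 0.0 to 1.0
    succeeded: Optional[bool] = None  # filled in later, once the result is known


def brier_score(entries: List[DecisionEntry]) -> float:
    """Mean squared gap between forecast and result over resolved entries.

    0.0 means every forecast was exactly right; always saying 0.5 scores 0.25.
    """
    resolved = [e for e in entries if e.succeeded is not None]
    if not resolved:
        raise ValueError("no resolved entries to score")
    total = sum((e.forecast_probability - float(e.succeeded)) ** 2 for e in resolved)
    return total / len(resolved)


# Invented example entries, purely for demonstration.
journal = [
    DecisionEntry(date(2020, 1, 6), "Enter market A", ["demand grows"], 0.9, True),
    DecisionEntry(date(2020, 3, 2), "Hire ahead of revenue", ["funding closes"], 0.7, False),
    DecisionEntry(date(2020, 6, 1), "Rewrite billing system", ["team stays intact"], 0.6, True),
    DecisionEntry(date(2021, 2, 1), "Acquire competitor", ["no antitrust review"], 0.8),
]

score = brier_score(journal)
print(f"Brier score over resolved decisions: {score:.2f}")
```

Recording the forecast before the result is known is the point of the design: it prevents hindsight from rewriting what was believed at the time, and the unresolved entry is simply skipped until its outcome arrives.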

Second-Order Effects and Strategic Thinking

First-order thinking is simplistic: “If I do $X$, then $Y$ will happen.” Second-order thinking asks: “And then what?”

Most “easy” solutions to complex problems are first-order successes that result in second-order disasters. Consider the introduction of a rent-control policy.

- First-Order Effect: Rent is cheaper for current tenants.

- Second-Order Effect: Developers may stop building new housing due to capped returns; landlords may cut back on maintenance; the total supply of housing can shrink, leading over time to a shortage that is worse than the original problem.

Better outcomes are the result of tracing the causal chain as far as possible. A systems thinker understands that every action has a reaction, and in a complex system, the “side effects” often become the “main effects” over time. Decision quality is measured by the ability to anticipate these downstream consequences. As the economist Frederic Bastiat noted, the difference between a bad economist and a good one is that the bad one focuses on “what is seen,” while the good one accounts for both “what is seen and what must be foreseen.”

Incentives and Thinking Quality

No analysis of thinking quality is complete without a study of incentives. As Charlie Munger famously remarked, “Never, ever, think about something else when you should be thinking about the power of incentives.”

Incentives act as a “gravitational pull” on cognition. Even highly intelligent individuals will produce poor thinking if they are incentivized to do so. The classic case is the Principal-Agent Problem, in which an agent’s rewards diverge from the interests of the principal they serve. If a corporate manager is paid based on this year’s stock price, they will naturally favor decisions that pump the price in the short term, even if it compromises the company’s decade-long solvency.

High-quality thinking requires an “incentive audit.” One must ask: “What are the structural rewards of this environment?” If the incentives are misaligned with reality, the thinking will inevitably become “motivated reasoning”—the process of starting with a desired conclusion (that serves the incentive) and reverse-engineering a logic to support it. Better outcomes require the structural alignment of incentives with the desired long-term results.

Variance, Randomness, and Outcome Noise

A rigorous thinker must accept the existence of “Outcome Noise.” In any system with more than a few variables, randomness plays a significant role in the final state. This is the difference between a simple, deterministic system (like a clock) and a complex, open system (like a weather pattern or a global economy).

The presence of noise means that there is a “gap” between thinking quality and outcome quality. This gap is Variance.

Outcome = Skill + Luck

In the short term, luck dominates. In the long term, skill (thinking quality) dominates: across many independent decisions the average contribution of luck shrinks toward zero, while the edge provided by better thinking persists and compounds.

Better thinking allows one to “filter” the noise. It prevents the psychological error of “over-reacting” to a random win or a random loss. By understanding the statistical nature of reality, a thinker can maintain a steady course during periods of negative variance, knowing that if the $EV$ is positive and each bet is sized small enough to survive a losing streak, the Law of Large Numbers will tend to deliver the reward.
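The relation Outcome = Skill + Luck can be explored with a short simulation. This is a sketch under stated assumptions: skill is modeled as a small constant edge, luck as zero-mean Gaussian noise, and the specific numbers (an edge of 0.1, a noise standard deviation of 1.0, 10,000 decisions) are hypothetical.

```python
import random
import statistics
from typing import List


def simulate_outcomes(skill: float, luck_sd: float, n: int, rng: random.Random) -> List[float]:
    """Draw n outcomes, each equal to a fixed skill edge plus zero-mean luck."""
    return [skill + rng.gauss(0.0, luck_sd) for _ in range(n)]


rng = random.Random(42)  # fixed seed so the run is repeatable
outcomes = simulate_outcomes(skill=0.1, luck_sd=1.0, n=10_000, rng=rng)

# Share of individual decisions that turned out badly despite a positive edge.
share_negative = sum(o < 0 for o in outcomes) / len(outcomes)

# Average over the whole sample, where the luck terms largely cancel.
long_run_average = statistics.fmean(outcomes)

print(f"Share of losing decisions: {share_negative:.2f}")
print(f"Average outcome over {len(outcomes)} decisions: {long_run_average:.3f}")
```

With an edge this small relative to the noise, close to half of the individual decisions lose, so any single result is nearly uninformative. The average over the full sample, by contrast, lands near the assumed edge of 0.1, which is the sense in which skill dominates over a long enough run.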

Compounding Decision Quality Over Time

The relationship between thinking and outcomes is not additive; it is multiplicative. A $1\%$ improvement in decision quality does not lead to a $1\%$ improvement in lifetime wealth or career success. It leads to a disproportionately larger outcome because decisions are “stacked.”

Each decision sets the “initial conditions” for the next one. A better decision today puts you in a better position to make an even better decision tomorrow. This is Path Dependency. Over twenty years, a person who makes decisions that are slightly more aligned with reality than their peers will find themselves at a vastly different destination.
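The gap between additive and multiplicative improvement can be illustrated numerically. The sketch below makes a strong simplifying assumption: every decision multiplies one's position by the same factor of 1.01. Real decisions are not this uniform, and the function names are hypothetical.

```python
def additive_growth(edge: float, n_decisions: int) -> float:
    """Position after n decisions if each edge were simply added on."""
    return 1.0 + edge * n_decisions


def compounded_growth(edge: float, n_decisions: int) -> float:
    """Position after n decisions if each one builds on the previous result."""
    return (1.0 + edge) ** n_decisions


for n in (10, 100, 1000):
    add = additive_growth(0.01, n)
    comp = compounded_growth(0.01, n)
    print(f"{n:>5} decisions: additive {add:8.2f}x   compounded {comp:10.2f}x")
```

At 10 decisions the two paths are nearly indistinguishable. At 100 decisions the compounded path is about 2.70x against 2.00x for the additive one, and by 1,000 decisions the difference is several orders of magnitude. The divergence comes entirely from each result becoming the starting point for the next.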

This is the “Matthew Effect”: “For to everyone who has, more will be given.” In the realm of cognition, to those who have better models, more accurate outcomes will be given. The cumulative advantage of slightly better thinking, repeated thousands of times, is a plausible mechanism behind the extreme “power-law” distributions we see in human success.

Why Better Thinking Does Not Guarantee Success

It is a mark of intellectual maturity to admit that even the highest-quality thinking does not guarantee success. We must distinguish between “maximizing probability” and “ensuring results.”

The universe is characterized by “deep uncertainty.” There are “unknown unknowns”—events that are not even on our probability distribution. A meteorite hitting the Earth or a global pandemic are events that can render even the most sophisticated strategic plan irrelevant. Better thinking is about robustness and antifragility. It is about building systems that can survive being “wrong” and thrive in the face of volatility.

However, one must still “pay the toll” to randomness. You can do everything right and still lose. Accepting this is not an admission of defeat, but an alignment with the true nature of reality. The goal is not to be a prophet who predicts the future perfectly, but to be an “actuary” who understands the odds and plays them consistently.

Viewing Outcomes Through Thinking Systems

This perspective shifts the focus from the ego to the process. It fosters a “growth mindset” because it suggests that the “limiter” on our outcomes is the quality of our mental software, which can be updated and refined. By viewing outcomes through the lens of decision-making frameworks, we achieve a level of emotional stability that is required for long-term survival in volatile systems. We stop “chasing” outcomes and start “perfecting” the engine that produces them.

Conclusion: Thinking as a Structural Advantage

In the final analysis, better thinking is the ultimate competitive moat. While effort, talent, and capital are essential inputs, they are ultimately limited by the quality of the “governor” that directs them. In uncertain, complex systems, higher-quality thinking functions as a structural advantage that quietly and relentlessly shifts the probabilities of success.

By internalizing the lessons of bounded rationality, correcting for systematic biases, employing a multi-disciplinary latticework of mental models, and embracing probabilistic reasoning, we align our internal maps with the external terrain. This alignment does not eliminate the noise of randomness, but it makes it far more likely that, over the long run, the signal of skill dominates the final result. Outcomes may be probabilistic, but the quality of the process is the variable that determines whether the “house” has the edge or the player does. In the long game of life, the advantage tends to go to the one with the better model of reality.