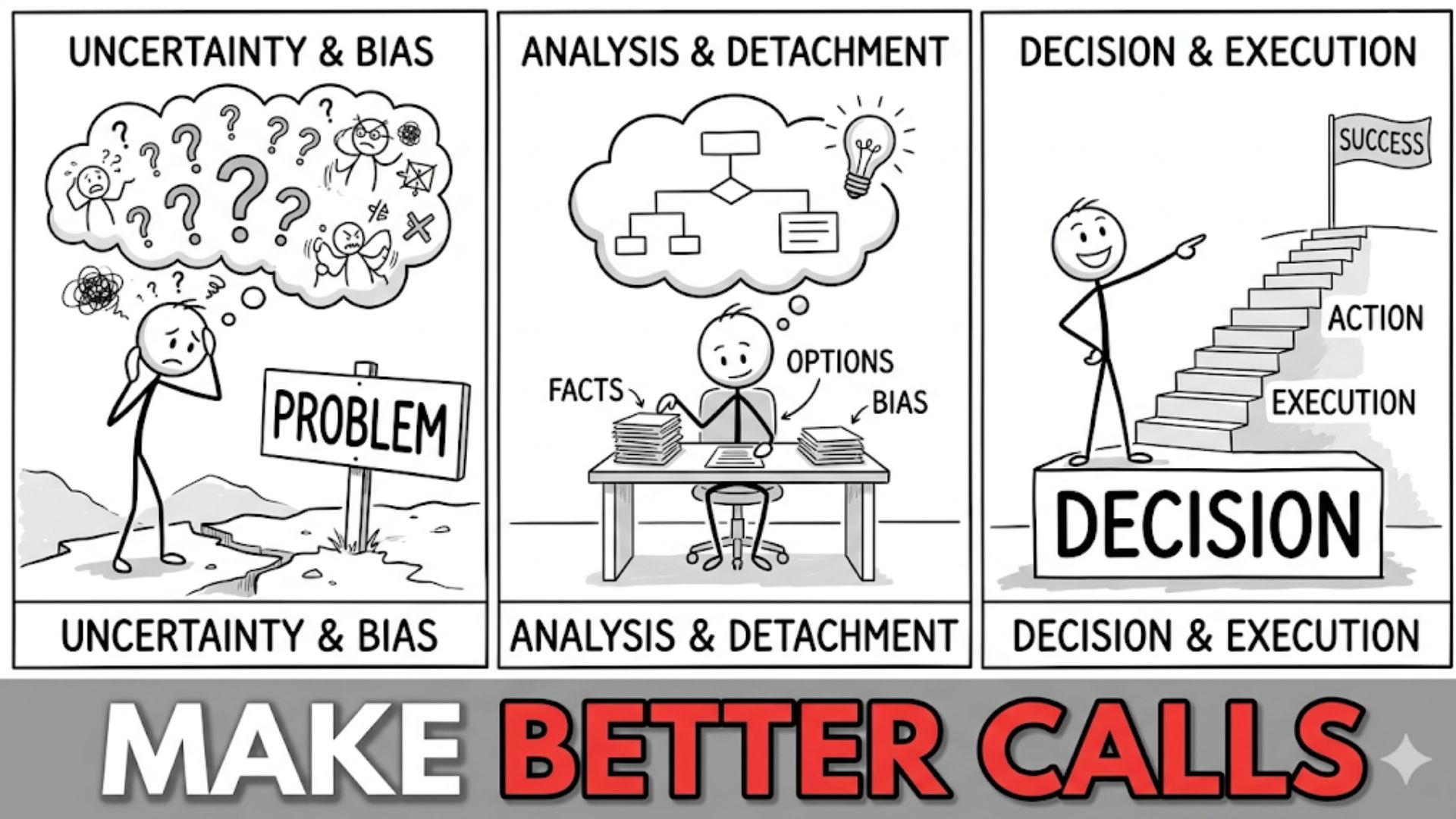

Introduction: Judgment as a Structured Process

In the analysis of human achievement and institutional durability, “good judgment” is frequently invoked as a nebulous, almost mystical quality. It is commonly attributed to a combination of innate intuition, seasoned experience, or a vague “feel” for the right path. However, after years of analyzing the mechanics of choice in high-stakes environments—ranging from capital allocation in volatile markets to the long-term trajectory of professional careers—I have found that this interpretation is structurally insufficient.

Judgment is not a singular trait, nor is it a synonym for intelligence. Rather, I observe that high-quality judgment is a structured cognitive process. It is an emergent property of a complex internal system designed to process information, assess probabilities, and calibrate beliefs under conditions of pervasive uncertainty. To understand the structure of good judgment, one must move beyond the anecdotal and investigate the underlying architecture of decision-making. This architecture is built upon specific, interacting components: probabilistic reasoning, an awareness of cognitive boundaries, the rigorous application of mental models, and a structural alignment with long-term consequences. When these components function in concert, they shift the distribution of outcomes toward more favorable results, not through a single moment of inspiration, but through a systematic reduction of error.

Judgment vs. Outcome

A foundational error in the evaluation of judgment is “resulting”—the tendency to judge the quality of a decision based solely on its outcome. In my study of probabilistic systems, I find that the link between a decision and its result is frequently decoupled by the presence of randomness or “noise.” A high-quality judgment can lead to a catastrophic outcome due to a “tail risk” event, while a poorly reasoned, impulsive choice can be rewarded with success through pure chance.

The structural analysis of judgment requires an analytical separation between process and result. Good judgment is defined by the quality of the reasoning at the time the decision was made, given the information available and the uncertainties involved. Evaluating judgment solely by its outcome is not only intellectually lazy but strategically dangerous; it reinforces “lucky” but flawed processes and discourages “unlucky” but rigorous ones. To improve judgment quality, we must prioritize the “epistemic audit” (an examination of the logic, data, and probabilistic assessments that informed the decision) over the terminal state of the world. Over the long run, a superior process will tend to yield superior aggregate outcomes, but short-term variance makes any single outcome a noisy signal of judgment quality.
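The gap between process and outcome can be made concrete with a small calculation. The sketch below assumes a hypothetical repeated decision that succeeds with probability 0.6 at even stakes (the numbers and the function name `prob_net_loss` are illustrative, not drawn from any dataset) and computes the exact chance of being behind after a given number of independent decisions.

```python
from math import comb

def prob_net_loss(p_win: float, n: int) -> float:
    """Exact probability of ending with more losses than wins after n
    independent even-stakes decisions, each won with probability p_win."""
    # "More losses than wins" means the win count k satisfies 2k < n.
    return sum(
        comb(n, k) * p_win**k * (1 - p_win) ** (n - k)
        for k in range(n + 1)
        if 2 * k < n
    )

# Hypothetical good process: each decision has a 60% chance of paying off.
P_WIN = 0.6
for n in (1, 11, 101):  # odd counts avoid ties
    print(f"{n:>3} decisions: P(behind) = {prob_net_loss(P_WIN, n):.3f}")
```

Under these assumptions a single decision from a sound process looks like a mistake 40% of the time, and that share shrinks as decisions accumulate. This is why one outcome says little about the process that produced it.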

Bounded Rationality and Cognitive Constraints

To understand the limits of judgment, we must start with the principle of bounded rationality, as introduced by Herbert Simon. Traditional economic models often posit a “rational actor” with infinite computational power and perfect information. In my observation of real-world systems, this actor does not exist. Human beings operate within significant cognitive constraints: limited information, finite time, and a restricted capacity to process complex, multi-variable environments.

Because of these constraints, we do not optimize; we “satisfice.” We seek solutions that are “good enough” given our immediate limitations. This bounded nature of our rationality is the primary source of judgment error. When the complexity of an environment exceeds the processing capacity of the decision-maker, the system reverts to simplified heuristics. While these mental shortcuts are evolutionarily efficient for survival in low-complexity environments, they often fail in the abstract, non-linear domains of modern finance or strategic planning. Good judgment, therefore, begins with a rigorous awareness of one’s own cognitive boundaries. It involves the implementation of external systems (checklists, independent red-teaming, and quantitative models) designed to expand the bounds of our rationality and compensate for our inherent processing limits.

Cognitive Biases and Judgment Errors

Building upon the framework of bounded rationality, the research pioneered by Daniel Kahneman and Amos Tversky identified that human error is not random but systematic. Our cognitive architecture is prone to specific “biases” that distort judgment in predictable ways. In my analysis of institutional failures, I have seen these biases function as structural defects in the decision-making engine.

Several biases are particularly corrosive to judgment:

- Overconfidence: The tendency to overestimate the precision of our knowledge and the probability of our success. This leads to inadequate “margins of safety” in financial and strategic planning.

- Anchoring: A disproportionate reliance on the first piece of information encountered, even if it is irrelevant to the current decision.

- Availability Bias: The inclination to overweight information that is most recent, emotionally salient, or easily recalled, rather than that which is statistically representative.

- Confirmation Bias: The active search for information that validates our existing beliefs while ignoring or discounting contradictory data.

Good judgment requires a systematic “de-biasing” process. It is not enough to simply be “aware” of these biases; high-quality judgment incorporates structural constraints that prevent these biases from influencing the final output. This involves creating an “inner scorecard” and seeking out disconfirming evidence as a standard operating procedure.

Probabilistic Thinking and Uncertainty

At its core, judgment is the management of uncertainty. Most professionals operate under the illusion of certainty, using binary language (Yes/No, Will/Won’t) to describe future states. In my study of decision-making, I find that the transition from binary to probabilistic thinking is the hallmark of superior judgment.
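One way to see the difference between binary and probabilistic framing is to write a forecast as a distribution over scenarios instead of a single yes/no call. The sketch below is illustrative only: the scenario names, probabilities, and payoffs are hypothetical, as are the helper names `expected_value` and `prob_of_loss`.

```python
# Hypothetical scenarios for one decision: name -> (probability, payoff in arbitrary units)
scenarios = {
    "strong success": (0.20, 300.0),
    "modest success": (0.50, 50.0),
    "failure": (0.30, -100.0),
}

def expected_value(dist):
    """Probability-weighted average payoff across all scenarios."""
    total_p = sum(p for p, _ in dist.values())
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * payoff for p, payoff in dist.values())

def prob_of_loss(dist):
    """Total probability assigned to scenarios with a negative payoff."""
    return sum(p for p, payoff in dist.values() if payoff < 0)

print(f"expected value: {expected_value(scenarios):.1f}")
print(f"probability of loss: {prob_of_loss(scenarios):.2f}")
```

A binary framing would collapse this to "it will work," hiding the fact that the same decision carries a positive expected value and a substantial chance of loss at the same time.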

Mental Models and Structured Reasoning

Superior judgment is rarely the result of “siloed” knowledge. Instead, I observe that the most effective decision-makers use a “latticework” of mental models, a concept popularized by Charlie Munger. These models are foundational concepts from diverse disciplines—physics, biology, psychology, engineering, and economics—that can be applied to simplify and interpret complex systems.

When we rely on a single model (e.g., only thinking like an economist or a marketer), we develop a structural blind spot: to the person who has only a hammer, every problem looks like a nail. High-quality judgment requires a multidisciplinary approach:

- Entropy (Physics): Recognizing that without constant input and organization, systems naturally trend toward disorder.

- Incentives (Economics): Understanding that people respond predictably to the rewards and penalties they face, so behavior tends to follow the payoff structure.

- Redundancy (Engineering): Building in backups and margins of safety to survive unexpected shocks.

- Evolutionary Biology: Recognizing the power of adaptation and the selection pressures of a competitive market.

By layering these models, a decision-maker can “triangulate” reality. If multiple models from different disciplines point toward the same conclusion, the confidence in that judgment increases. If they conflict, it indicates a high degree of “epistemic risk” that requires further investigation.

Time Horizons and Judgment Quality

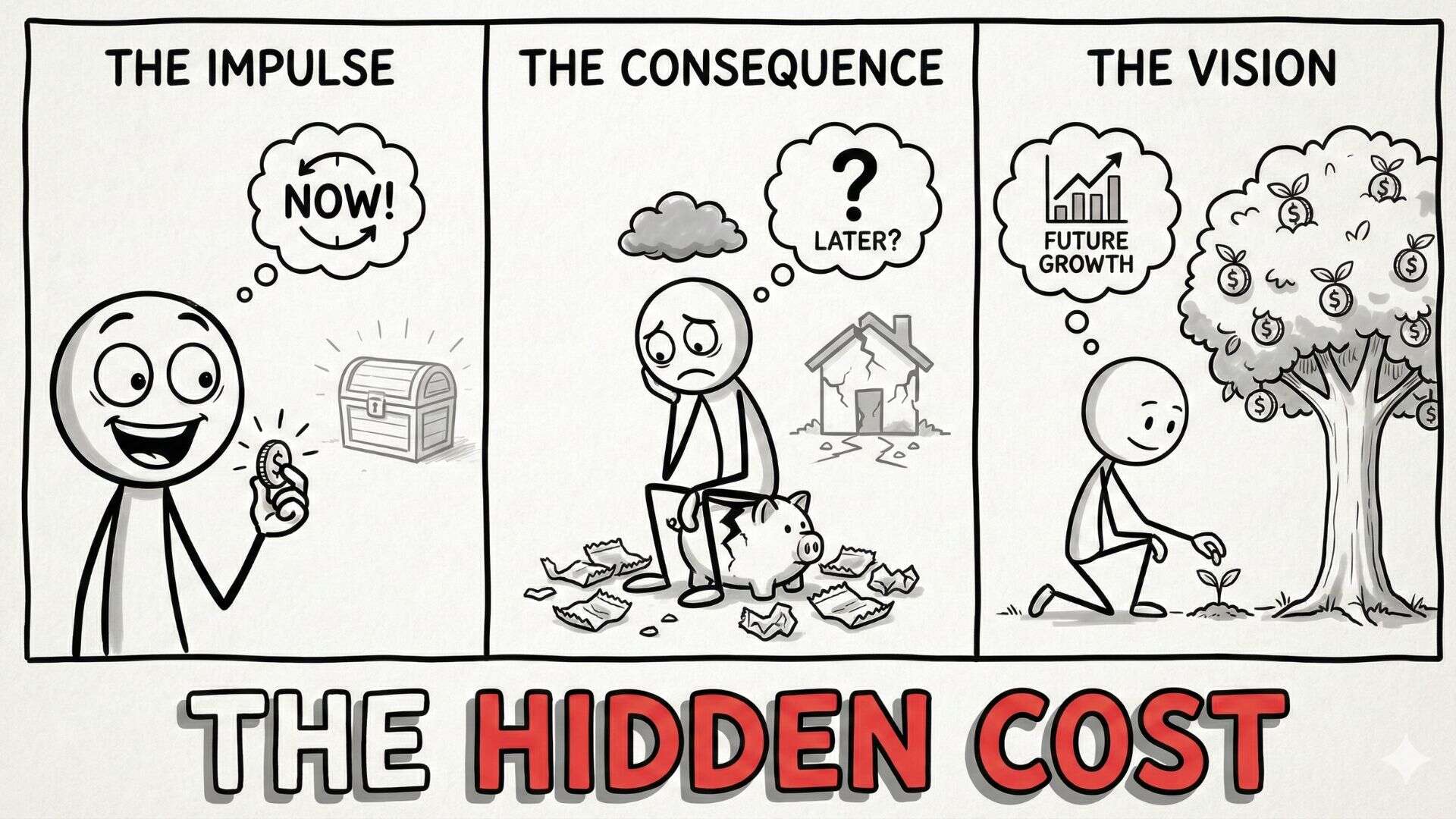

One of the primary differentiators of judgment quality is the time horizon of the decision-maker. In many institutional and professional settings, judgment is truncated by short-term incentives: quarterly earnings, annual performance reviews, or the immediate feedback loops of social media. This “temporal myopia” is a structural barrier to good judgment.

Most meaningful systems—be they career capital, compound interest, or institutional reputation—follow non-linear growth patterns that only yield results over long horizons. Short-term thinking often leads to “local maxima,” where a decision provides immediate gratification but impairs long-term viability. Good judgment involves the ability to resist these short-term pressures and align decisions with their second- and third-order consequences over years or decades.

This requires an understanding of “time-horizon arbitrage.” Because the majority of market participants are optimized for the short term, those who can maintain a long-term perspective gain a competitive advantage. They can afford to invest in foundational assets—such as deep expertise or high-trust relationships—that do not provide immediate ROI but compound into insurmountable moats over time.

Feedback Loops and Calibration

Judgment is not a static achievement; it is a calibrated skill. In my analysis of expert performance, I find that the quality of judgment is directly proportional to the quality of the feedback loops available to the decision-maker. A feedback loop exists when the outcome of a decision is returned as input to the decision-making process, allowing for the refinement of internal models.

The difficulty in high-stakes strategy and investment is that feedback is often delayed or “noisy.” It is difficult to know if a success was due to skill or a favorable market cycle. Consequently, good judgment requires the creation of “synthetic” feedback loops. This involves keeping a “decision journal” to record the assumptions, probabilities, and emotional state at the time of a decision. When the outcome eventually manifests, the decision-maker can compare the reality to their original mental state.

This process of “calibration” is essential for reducing overconfidence. It allows the decision-maker to adjust their “priors”—their baseline beliefs—based on empirical evidence. Without rigorous feedback loops, experience does not lead to better judgment; it merely leads to more confident error.
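A decision journal becomes a calibration tool once its entries are scored. The sketch below is one possible way to do that, using the Brier score, a standard scoring rule for probability forecasts that the text above does not name. The `JournalEntry` structure and the sample entries are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class JournalEntry:
    claim: str          # what was predicted at decision time
    probability: float  # stated confidence that the claim would come true
    happened: bool      # recorded later, once the outcome is known

def brier_score(entries):
    """Mean squared gap between stated probability and outcome (0 is perfect)."""
    return sum((e.probability - float(e.happened)) ** 2 for e in entries) / len(entries)

def calibration_table(entries):
    """Map each stated probability to (observed hit rate, number of entries)."""
    buckets = {}
    for e in entries:
        buckets.setdefault(round(e.probability, 1), []).append(e.happened)
    return {p: (sum(hits) / len(hits), len(hits)) for p, hits in sorted(buckets.items())}

# Hypothetical journal entries, for illustration only.
journal = [
    JournalEntry("Project ships on schedule", 0.9, True),
    JournalEntry("Key hire accepts the offer", 0.9, False),
    JournalEntry("Supplier holds its price", 0.9, False),
    JournalEntry("Pilot customer renews", 0.9, True),
    JournalEntry("Competitor exits the segment", 0.6, True),
    JournalEntry("Regulation passes this year", 0.6, False),
]

print(f"Brier score: {brier_score(journal):.2f}")
for stated, (hit_rate, count) in calibration_table(journal).items():
    print(f"stated {stated:.0%} -> observed {hit_rate:.0%} over {count} entries")
```

In this made-up journal, claims held at 90% confidence came true only half the time, which is the signature of overconfidence. A real journal would need far more entries before any such pattern could be trusted.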

Second-Order Thinking

First-order thinking is simplistic and superficial: “If I do $X$, then $Y$ will happen.” Second-order thinking asks: “And then what?” In complex systems, every action has a reaction, and those reactions have their own consequences.

I have observed that many “solutions” to complex problems create second-order effects that are worse than the original problem. For example, a company might cut its R&D budget to meet a quarterly earnings target (first-order effect: higher current profits). However, the second-order effect is a decay in the product pipeline, leading to a loss of market share three years later.

Good judgment requires the ability to map these causal chains. It involves identifying the “side effects” and “downstream consequences” of a decision before they occur. This is particularly important in systems characterized by “non-linearity,” where small changes can lead to disproportionately large effects. The structure of good judgment is thus rooted in the anticipation of the “unseen” consequences that short-term thinkers ignore.

Incentives and Judgment Distortion

No analysis of judgment is complete without an investigation of incentives. As the adage goes, “Show me the incentive, and I will show you the outcome.” In my review of systemic failures, I have consistently found that poor judgment is often not a lack of intelligence but a response to misaligned incentives.

A classic form of this misalignment is the “Principal-Agent Problem.” If a manager is rewarded on a metric that does not correlate with long-term value, their judgment will naturally skew toward optimizing that metric, even if it is detrimental to the organization. The accompanying “motivated reasoning” is a powerful psychological force; it allows individuals to justify poor choices because those choices serve their personal or professional interests.

Good judgment requires an “incentive audit”—the ability to recognize when your own reasoning is being pulled by the “gravitational force” of a specific reward. It also involves designing systems that align personal incentives with long-term institutional goals. Judgment quality is not just a matter of character; it is a matter of environmental design.

Variance and Noise in Decision-Making

To improve judgment, one must distinguish between “bias” and “noise.” As Kahneman, Sibony, and Sunstein have argued, noise is the unwanted variability in human judgment. If two judges give different sentences for the same crime, or two doctors give different diagnoses for the same symptoms, that variability is noise.

In any complex system, there is a significant amount of “outcome noise” caused by randomness. Good judgment involves filtering this noise to find the underlying signal. This requires a statistical mindset: understanding the “base rate” (the typical outcome for a given situation) and recognizing when a specific result is merely a random deviation from that base rate.

A structural advantage in judgment is achieved when a decision-maker can identify when they are in a “noisy” environment and adjust their confidence levels accordingly. In low-noise environments (like chess or simple physics), judgment can be highly precise. In high-noise environments (like macroeconomics or venture capital), judgment must be more humble, focusing on “not being wrong” rather than “being precisely right.”
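The distinction between bias and noise can be expressed numerically. When several people estimate the same quantity, the mean squared error of their estimates splits into squared bias (the shared average miss) plus the variance of the estimates around their own mean (the noise). The sketch below applies that standard statistical decomposition; the estimates, the true value, and the function name `decompose_error` are hypothetical.

```python
from statistics import mean, pvariance

def decompose_error(judgments, true_value):
    """Return (bias, noise variance, mean squared error) for a set of
    judgments of the same quantity."""
    errors = [j - true_value for j in judgments]
    bias = mean(errors)                      # average miss shared by the group
    noise_var = pvariance(judgments)         # scatter around the group's own mean
    mse = mean([e**2 for e in errors])       # overall error
    return bias, noise_var, mse

# Hypothetical: five analysts estimate a quantity whose true value is 100.
estimates = [110.0, 120.0, 90.0, 130.0, 100.0]
bias, noise_var, mse = decompose_error(estimates, true_value=100.0)

print(f"bias = {bias:.1f}, noise variance = {noise_var:.1f}, MSE = {mse:.1f}")
print(f"bias^2 + noise variance = {bias**2 + noise_var:.1f}")
```

In this example the noise term contributes more to total error than the bias term, so removing the shared bias alone would leave most of the error in place.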

Compounding Judgment Over Time

The relationship between judgment and outcomes is multiplicative. A 1% improvement in judgment quality does not lead to a 1% improvement in life outcomes; it compounds. Each high-quality judgment puts the decision-maker in a better position to make the next judgment. This is the “Matthew Effect”: “For to everyone who has, more will be given.”

If an individual consistently makes decisions that are slightly better than the “base rate”—by reducing bias, thinking probabilistically, and considering second-order effects—the aggregate advantage over twenty years becomes insurmountable. This is the “Mathematics of Compounding Judgment.” Small, incremental improvements in decision process accumulate into massive disparities in terminal outcomes. The goal is not to be a “genius” in a single moment, but to be “consistently not stupid” over a long duration.
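The arithmetic behind this claim can be sketched with a deliberately simple model. It assumes, purely for illustration, that each decision multiplies the decision-maker's position by a fixed factor of (1 + edge); real decisions are neither independent nor uniform in size, and the function names are hypothetical.

```python
def compounded_advantage(edge_per_decision: float, decisions: int) -> float:
    """Relative position after `decisions` steps if each step multiplies
    the position by (1 + edge_per_decision)."""
    return (1 + edge_per_decision) ** decisions

def additive_advantage(edge_per_decision: float, decisions: int) -> float:
    """Relative position if the same edge merely added up instead of compounding."""
    return 1 + edge_per_decision * decisions

EDGE = 0.01  # hypothetical 1% edge per decision
for n in (10, 100, 500):
    print(
        f"{n:>3} decisions: compounded x{compounded_advantage(EDGE, n):.2f}, "
        f"additive x{additive_advantage(EDGE, n):.2f}"
    )
```

Under these assumptions, 100 decisions with a 1% edge multiply the starting position by roughly 2.7, against 2.0 if the gains merely added up, and the gap widens with every further decision.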

Why Good Judgment Is Rare

If the structure of good judgment can be mapped and analyzed, why does it remain so rare? The answer lies in the interaction of biological, psychological, and institutional barriers.

- Biological Inertia: Our brains are evolutionarily optimized for “System 1” thinking: fast, intuitive, and emotional. “System 2” thinking, which is analytical and rigorous, is cognitively expensive and requires significant metabolic energy. We are “wired” to take shortcuts.

- Institutional Pressure: As noted, most modern institutions are designed around short-term cycles and narrow metrics that discourage second-order thinking and long-term horizons.

- The Ego Barrier: High-quality judgment requires a level of intellectual humility that is often at odds with the demands of professional “leadership.” To admit uncertainty and think in probabilities is often interpreted as a lack of confidence.

- Complexity: The world is becoming increasingly non-linear and interconnected, making the “latticework of mental models” even more difficult to maintain.

Good judgment is difficult because it requires a constant, active resistance to these pressures. It is an “unnatural” act that must be practiced with the discipline of an athlete or a scientist.

Viewing Judgment as a System

The most significant analytical insight I can offer is that judgment should be viewed as a system of interacting components rather than a single skill. It is an engine where the “fuel” is information, the “gears” are mental models, and the “output” is a probabilistic assessment.

If any single component is flawed—if the feedback loops are broken, if the incentives are misaligned, or if the biases are unchecked—the entire engine fails. This systemic view allows for a more targeted analysis of judgment failure. When a decision goes wrong, we don’t just say, “The judgment was bad.” We ask: “Where in the system did the breakdown occur? Was it a failure of calibration? A second-order blind spot? Or a distortion caused by a short-term incentive?”

By treating judgment as a system, we can begin to “engineer” better outcomes. We can debug the process, upgrade the models, and calibrate the probabilities. This shifts the focus from “who” made the decision to “how” the decision was constructed, fostering a culture of continuous improvement in judgment quality.

Conclusion: The Architecture of Better Judgment

In the final analysis, good judgment is the ultimate structural advantage in an uncertain world. While effort and talent are necessary inputs, they are ultimately limited by the quality of the “governor” that directs them. High-quality judgment does not eliminate risk; it manages it. It does not predict the future; it prepares for a range of futures.

Better judgment emerges when we accept our cognitive limitations and build a structured architecture to compensate for them. This architecture is grounded in the math of probability, the logic of multidisciplinary mental models, and the discipline of long-term thinking. It requires a relentless focus on process over outcome and a commitment to honest feedback and calibration.

While the world remains inherently stochastic and noisy, the structure of our judgment is within our control. By understanding and managing the components of the decision-making system—probability, bias, time, feedback, and incentives—we can quietly and consistently shift the odds in our favor. Good judgment is not a gift; it is a discipline. It is the slow, cumulative work of building a mind that is calibrated for reality.